Exemplar Guide

Getting Started

- Start the virtual machine with configuration file vm/I-EPOS.ovf and virtual disk vm/I-EPOS.vmdk.

If you cannot start the virtual machine, try using Oracle VirtualBox. It was tested on Windows 10 and macOS Sierra.

- Log in as user “I-EPOS VM” with the password “iepos”.

- Now let us try to execute the program.

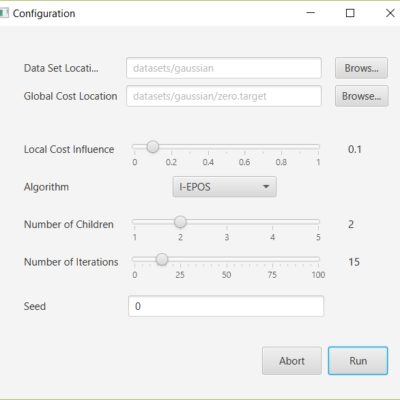

- Start the file “I-EPOS” on the homescreen with a double click.

- The configuration window opens. This is where the simulation can be configured. It should look as follows:

- Click the “Run” button to start the simulation.

- After a few seconds the result window should appear, showing the results of the simulation. More about this later. The window should look as follows:

- Click on the “Next” button in order to view the results of the second iteration. The bottom part of the result window should change. The window should now look as follows:

Step-by-Step Instructions

The instructions assume that the VM is started, the user is logged in and is on the homescreen as described in Getting Started.

Scenario 1

We consider a bicycle sharing system implemented in a city. Stations are set up all across the city, each with bicycles and some free parking slots. As users ride across the city, they move bicycles between the different stations. We notice that over time some stations run short of bicycles, while others run out of parking capacity. We solve this problem with I-EPOS.

- Start I-EPOS from the homescreen.

- Select the dataset by clicking the “Browse” button to the right of “Data Set Location”, and selecting the directory “bicycle”.

- We balance the bicycle load across the stations by minimizing, to zero or close to zero, the changes in the number of bicycles stored in each station. Therefore, we leave the “Global Cost Location” at “zero.target”. I-EPOS will try to minimize the squared distance between the total change in the number of bicycles at each station (the global response) and the target, which is the zero vector in this case.

- For now we are only interested in minimizing the global cost, so please set the “Local Cost Influence” to 0.

- The other parameters should be left as they are. They specify that we want to execute I-EPOS in a binary tree over 15 iterations, which is sufficient for the algorithm to converge for this example. The “Seed” defines the seed for the random number generator used by I-EPOS. Choosing a different value would result in slightly different results.

- Click the “Run” button to start the simulation.

- While I-EPOS is executing, it tries to find combinations of user plans that make the total change in the number of bikes (referred to as the global response) as small as possible. When the result window opens, the top section shows how the algorithm reduced the global cost from its initial guess over the course of several iterations. The initial guess is already considerably better than the solution we would get if we let users freely choose bicycle stations; in that case the global cost would be nearly 4000. In the following iterations the global cost is quickly reduced, reaching its minimum after iteration 12.

On the bottom left we see the global response after the first iteration. Each dimension in this plot represents the change in the number of bikes for one bicycle station over a 2 hour time frame. The initial guess of the algorithm shows that a station is missing 16 bikes after 2 hours of users leaving from or arriving at the station. If users could travel however they liked, the largest change would be over 50 bikes.

On the bottom right we see the network of agents. Each agent that changed its selection compared to the previous iteration is marked in black. Since this is the state after the first iteration, all agents had to select some initial plan and therefore changed their selection.

- Navigate through the iterations using the buttons “Next” and “Previous” to see how I-EPOS improves the global response step by step. Notice how the global response gradually becomes more stable over the iterations. In the end, I-EPOS stabilizes all stations to the point that they lose or gain one bicycle at most, compared to the 16 in the first iteration. In the first few iterations we also see how I-EPOS ensures monotonic improvement of the global cost by limiting the change to a subset of agents.

- Close the report window, so that you are back at the homescreen.
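The quantities used in this scenario can be illustrated with a minimal Python sketch. It is not the I-EPOS implementation: the function names and the toy plans are made up for illustration, and it assumes that the global response is the element-wise sum of the plans selected by the agents and that the global cost is its squared distance to the target.

```python
def global_response(selected_plans):
    """Element-wise sum of the plans selected by all agents (assumed definition)."""
    return [sum(values) for values in zip(*selected_plans)]


def global_cost(response, target):
    """Squared Euclidean distance between the global response and the target."""
    return sum((r - t) ** 2 for r, t in zip(response, target))


# Toy example: three agents, four stations. Each value is the change in the
# number of bikes a plan causes at one station (hypothetical numbers).
plans = [
    [1, -1, 0, 0],
    [0, 1, -1, 0],
    [-1, 0, 0, 1],
]
zero_target = [0, 0, 0, 0]  # plays the role of "zero.target"

response = global_response(plans)
cost = global_cost(response, zero_target)
print(response, cost)  # prints: [0, 0, -1, 1] 2
```

A response closer to the zero vector means that the stations gain or lose fewer bikes, which is what the global cost plot in the result window tracks over the iterations.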

Scenario 2

We notice that the users may not prefer the suggestions I-EPOS makes. If the users could freely choose their plans, the global response would be as follows:

In order to make the system more appealing to users, we configure I-EPOS to take their preferences into account, encoded in the local cost.

- Start I-EPOS from the homescreen.

- Select the bicycle dataset again as explained above.

- Set the “Local Cost Influence” to 0.9.

- Click the “Run” button to start the simulation.

- I-EPOS now needs fewer iterations to reduce the global cost (top), since the influence of the local cost on the plan selection process limits the flexibility of the algorithm in minimizing the global cost. The average local cost of all agents is also plotted as a dashed line. At about 0.004, it is nearly optimal. Because the users in the dataset can have multiple plans with minimal local cost, I-EPOS still has enough freedom to greatly reduce the global cost.

The global response (bottom left) is already quite similar to the user-preferred result shown above. However, I-EPOS manages to turn this initial solution that many users like into a solution with much lower global cost without sacrificing local cost.

- Navigate through the iterations again using the buttons “Next” and “Previous” to see how I-EPOS improves the global response step by step. Notice that the global response is not as stable as it was in our previous simulation. Observe that I-EPOS mainly reduces the large spikes in the user-preferred response (shown above), which have the largest effect on the global cost.
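To see why a high “Local Cost Influence” still leaves room for improvement, consider the following sketch of a single agent choosing a plan. The weighting formula used here, (1 - influence) * global cost + influence * local cost, is an assumption made for illustration; this guide does not state how I-EPOS combines the two costs. All names and numbers are hypothetical.

```python
def squared_distance(response, target):
    """Squared Euclidean distance between two vectors."""
    return sum((r - t) ** 2 for r, t in zip(response, target))


def select_plan(plans, local_costs, others_response, target, influence):
    """Return the index of the plan with the lowest weighted cost.

    Assumed scoring: (1 - influence) * global cost + influence * local cost.
    """
    best_index, best_score = None, None
    for index, (plan, local_cost) in enumerate(zip(plans, local_costs)):
        # Global response if this agent picks `plan` on top of everyone else.
        response = [o + p for o, p in zip(others_response, plan)]
        score = ((1 - influence) * squared_distance(response, target)
                 + influence * local_cost)
        if best_score is None or score < best_score:
            best_index, best_score = index, score
    return best_index


# One agent with two plans over two stations (hypothetical numbers).
plans = [[2, -2], [0, 0]]   # plan 0 moves bikes, plan 1 changes nothing
local_costs = [0.0, 1.0]    # the user prefers plan 0
others = [0, 0]             # aggregate of all other agents' plans
target = [0, 0]

print(select_plan(plans, local_costs, others, target, influence=0.0))  # 1
print(select_plan(plans, local_costs, others, target, influence=0.9))  # 0
```

With an influence of 0 the agent ignores the user's preference and picks the plan that is best for the system. With 0.9 the preferred plan wins. When several plans share the minimal local cost, the global cost term decides between them, which matches the freedom described above.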

Scenario 3

Now assume that users have an app that recommends which bicycle stations they should use. However, not all of our users make use of the app. We want to use I-EPOS to let all participating users help us stabilize the traffic caused by non-participating users.

- Start I-EPOS from the homescreen.

- Select the bicycle dataset again as explained in Scenario 1.

- Select the target by clicking the “Browse” button to the right of “Global Cost Location”, and selecting the file “incentive.target”. This target specifies that we want the left half of the stations to lose 5 bikes and the right half of the stations to receive 5 bikes over a 2 hour period. This should correct all bicycle moves made by non-participating users.

- Set the “Local Cost Influence” to 0.5. Usually we would of course choose 0.9 again. However, with a reduced local cost influence the results of this scenario are more apparent.

- Click the “Run” button to start the simulation.

- The global response now shows strongly negative values for the left half of the stations and strongly positive values for the right half. The longer convergence period shown in the global cost plot (top) indicates that matching the target is challenging in this case.
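The shape of the target used in this scenario can be sketched as follows. This only builds the vector in memory; the file format of “incentive.target” is not described in this guide, so nothing is read or written. The function name, the number of stations and the handling of an odd station count are assumptions.

```python
def incentive_target(num_stations, amount=5):
    """Target vector: the left half loses `amount` bikes, the right half gains them.

    With an odd number of stations the extra station is counted as part of the
    right half (an arbitrary choice for this sketch).
    """
    half = num_stations // 2
    return [-amount] * half + [amount] * (num_stations - half)


def squared_distance(response, target):
    """Squared Euclidean distance between a global response and the target."""
    return sum((r - t) ** 2 for r, t in zip(response, target))


target = incentive_target(6)  # 6 stations is a made-up number
print(target)                 # prints: [-5, -5, -5, 5, 5, 5]

# A response of all zeros, ideal in Scenario 1, is far from this target.
print(squared_distance([0] * 6, target))  # prints: 150
```

A response that was optimal for the zero target now incurs a large cost, so the agents have to select plans that actively move bikes from the left half of the stations to the right half.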

Live deployment

An implementation of I-EPOS for live deployment, in which agents run in parallel as separate processes, is available in the following branch: https://github.com/epournaras/EPOS/tree/LiveExperiment. Note that this implementation is functional yet experimental; aligning it fully with the simulations performed by the main software is work in progress.

To execute it, Oracle Java 8 has to be installed. At the moment it can only be run from the command line:

cd experimental

java -jar IEPOS.jar

Troubleshooting

If you need help in using or extending I-EPOS, feel free to contact us.

| Name | Email |
| --- | --- |
| Evangelos Pournaras | epournaras@ethz.ch |
| Peter Pilgerstorfer | peter.pilgerstorfer@outlook.com |